You remember the room. The slides were clean. The demo ran perfectly. When the Chief Data Officer leaned forward and the Board offered more than just polite applause, it felt like the Agentic Era had finally arrived at your doorstep.

That was eight months ago.

Since then, the security review has been in progress. The data team said they'd connect the live pipeline after the quarter. Legal has questions nobody has answered. Your PoC is suffocating in AI pilot purgatory, a state where promising models never make it into live environments because of the implementation gap between demo and deployment.

You're not alone. S&P Global's 2025 research found that 42% of companies abandoned most of their AI initiatives last year, up from 17% the year before. On average, nearly 46% of all enterprise PoCs are scrapped before they ever touch a live production environment.

When enterprise AI projects fail after the PoC, it usually comes down to one thing: the AI didn't fail. The bridge to production did.

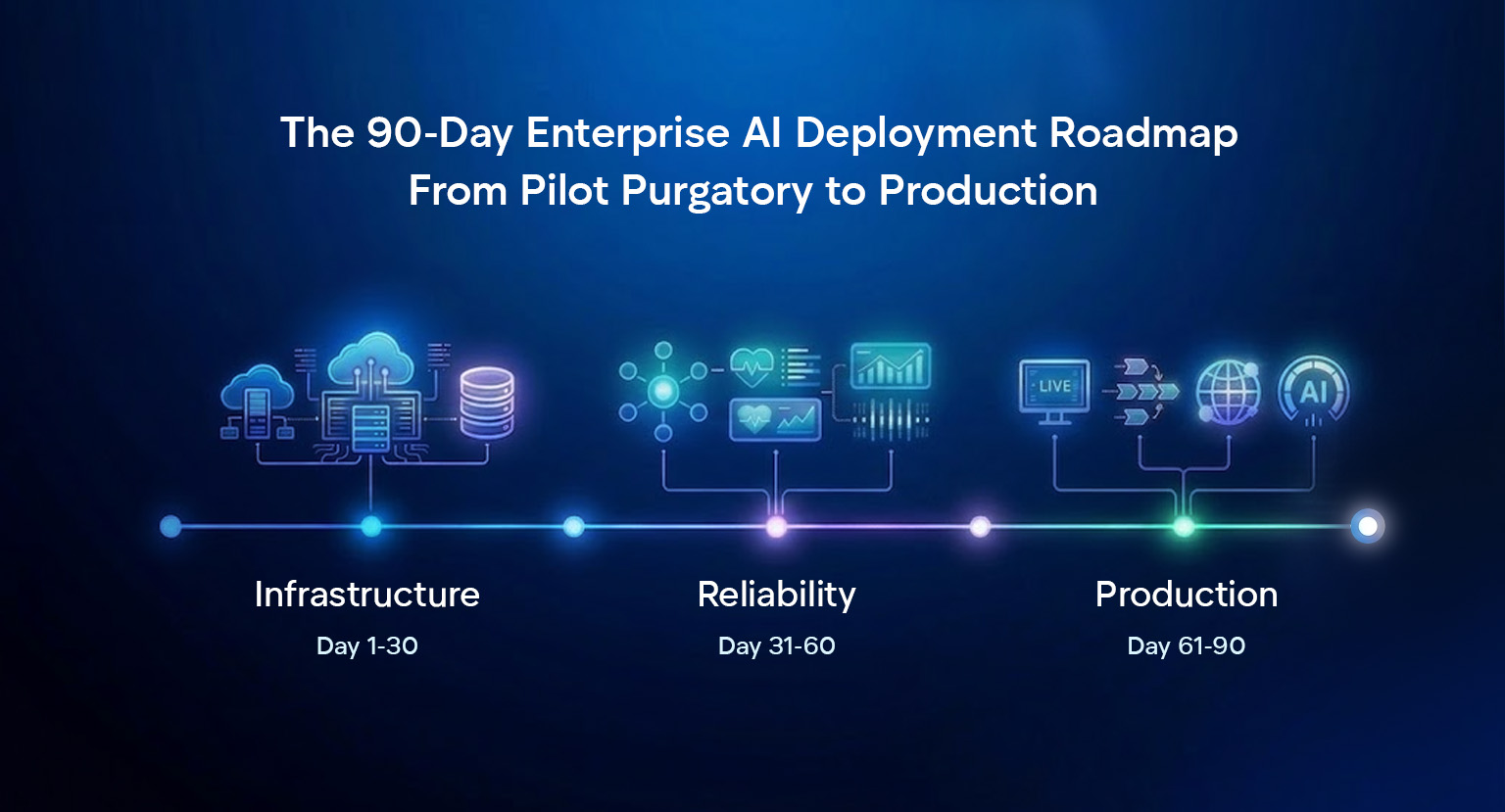

The PoC was never the hard part. Producing a demo on curated data in a controlled sandbox is easy. What kills enterprise AI is the 90-day journey from 'it works' to 'it's operational'.

That's where this playbook comes in: it outlines the formula used by the 30% of companies that actually ship AI (McKinsey).

To understand the systemic hurdles that prevent projects from moving forward, read: Why Enterprise AI Pilot Projects Fail to Scale

Enterprise AI Production Readiness Audit: What to Fix Before Day 1

Before the 90-day clock starts, you must bridge the gap between a lab experiment and a production system. A PoC proves an AI can work under ideal conditions; AI production readiness means making it work reliably within a messy, live ecosystem.

Before Day 1, perform a brutal audit of your foundation:

- Does the AI connect to live enterprise systems (CRM/ERP) rather than curated static data?

- Is there a named business owner outside the innovation team accountable for long-term adoption?

- Has security and compliance formally reviewed the architecture to prevent a governance wall at Day 60?

- Is there a defined error-rate threshold at which the AI is pulled and the manual rollback plan begins?

If the answer is 'No' to any of these, that isn't a reason to stop — it's a reason to start the 90-day journey with the right Logic Gate Architecture in place.

Infrastructure Hardening & AI Stakeholder Alignment (Days 1–30)

At this stage, every instinct points toward feature expansion and user growth. In the enterprise, however, momentum is often a distraction. The 30% of firms that actually reach production do something counterintuitive: they go deeper before they go wider.

Phase 1 is not about building new things — it's about making your pilot production-worthy. This is the invisible work: infrastructure hardening, data pipeline transitions, and security onboarding. None of it shows up in a demo, but all of it determines whether Days 31–90 succeed or collapse.

1. The Continuous Logic Pipeline

Production requires a fundamental shift from static datasets to a Continuous Logic Pipeline. This is the first step in a professional MLOps implementation. For AI deployment on legacy systems, choose your integrations based on your existing architecture:

- Wrap: Build an API layer around the legacy system that feeds clean, structured data to the AI without touching the core.

- Connect: Sync legacy data to a modern warehouse (Snowflake/BigQuery). The standard for analytics-heavy use cases.

- Augment: Deploy the AI alongside the ERP with a middleware layer that intercepts and enriches specific data flows in real time.

Don't choose the most impressive pattern. Choose the one your current team can actually maintain at 3 AM.

For a deeper look at bridging the gap between old infrastructure and new intelligence, read: Legacy System Modernisation with AI

2. The Security Perimeter

In a regulated environment, security is not a checkbox at the end. It is a parallel workstream from Day 1. You must transition your AI from a standalone tool to a Secured Enterprise Endpoint.

In the first 30 days, lock down:

- Data Residency & RBAC: Which data does the AI touch, where does it live, who can access model outputs, and what happens to that data after inference?

- Endpoint Authentication: Every deployed AI model must sit behind an authenticated, rate-limited API endpoint.

- Governance Documentation: In regulated industries — loan approvals, patient routing, procurement workflows — start building the explainability trails now. If the AI affects a decision, you need a legal-grade audit trail.
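To make the "authenticated, rate-limited" requirement concrete, here is a minimal sketch of the gate in front of a model endpoint. It assumes a single shared API key and a token-bucket limiter; the names `TokenBucket` and `handle_request` are hypothetical, and a real deployment would more likely rely on an API gateway or identity provider than on hand-rolled code.

```python
import hmac


class TokenBucket:
    """Token-bucket limiter: bursts up to `capacity`, refilled at `refill_per_sec`."""

    def __init__(self, capacity: float, refill_per_sec: float, now: float = 0.0) -> None:
        self.capacity = capacity
        self.refill_per_sec = refill_per_sec
        self.tokens = capacity
        self.last_refill = now

    def allow(self, now: float) -> bool:
        # Refill in proportion to elapsed time, capped at capacity.
        elapsed = max(0.0, now - self.last_refill)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_sec)
        self.last_refill = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


def handle_request(api_key: str, expected_key: str, bucket: TokenBucket, now: float) -> int:
    """Return an HTTP-style status code for one inference request (illustrative only)."""
    # Constant-time comparison avoids leaking key contents through timing.
    if not hmac.compare_digest(api_key.encode(), expected_key.encode()):
        return 401  # unauthenticated
    if not bucket.allow(now):
        return 429  # rate limit exceeded
    return 200  # request may proceed to the model
```

The clock is passed in as `now` so the behaviour can be tested deterministically. In a running service it would typically come from `time.monotonic()`.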

3. Stakeholder Alignment & AI Board Approval Roadmap

By Day 30, you need a single-page document for leadership. This is not a status update. It is a Go/No-Go Decision Framework. The Board needs to see three unglamorous metrics before they authorise Phase 2 investment:

- Data Readiness Score: What percentage of the live data your AI needs is accessible, clean, and flowing through the new pipeline? Anything below 80% is a No-Go.

- Security Clearance Status: Has the security team formally acknowledged the project and begun their review? A signed scope-of-review document is the minimum.

- Operational Commitment: Is there a named person from a business unit who has formally accepted operational ownership of the post-launch system?

Phase 1 is not about the AI. It's about the organisation's readiness to absorb the AI. The board needs to see that you understand the difference.
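The three Day 30 metrics can be expressed as a simple gate. The sketch below is one possible encoding, not a prescribed tool: the `Phase1Status` fields and the `go_no_go` function are hypothetical names, and only the 80% readiness threshold comes from the text above.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Phase1Status:
    data_readiness_pct: float          # share of required live data that is accessible and clean
    security_scope_signed: bool        # signed scope-of-review document exists
    operational_owner: Optional[str]   # named business-unit owner, or None


def go_no_go(status: Phase1Status, min_readiness: float = 80.0) -> Tuple[bool, List[str]]:
    """Return (decision, blockers). Any blocker makes the decision a No-Go."""
    blockers: List[str] = []
    if status.data_readiness_pct < min_readiness:
        blockers.append("data readiness below threshold")
    if not status.security_scope_signed:
        blockers.append("no signed security scope-of-review")
    if not status.operational_owner:
        blockers.append("no named operational owner")
    return (not blockers, blockers)
```

Returning the list of blockers alongside the decision keeps the one-page document honest: a No-Go always comes with the specific reasons that need fixing.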

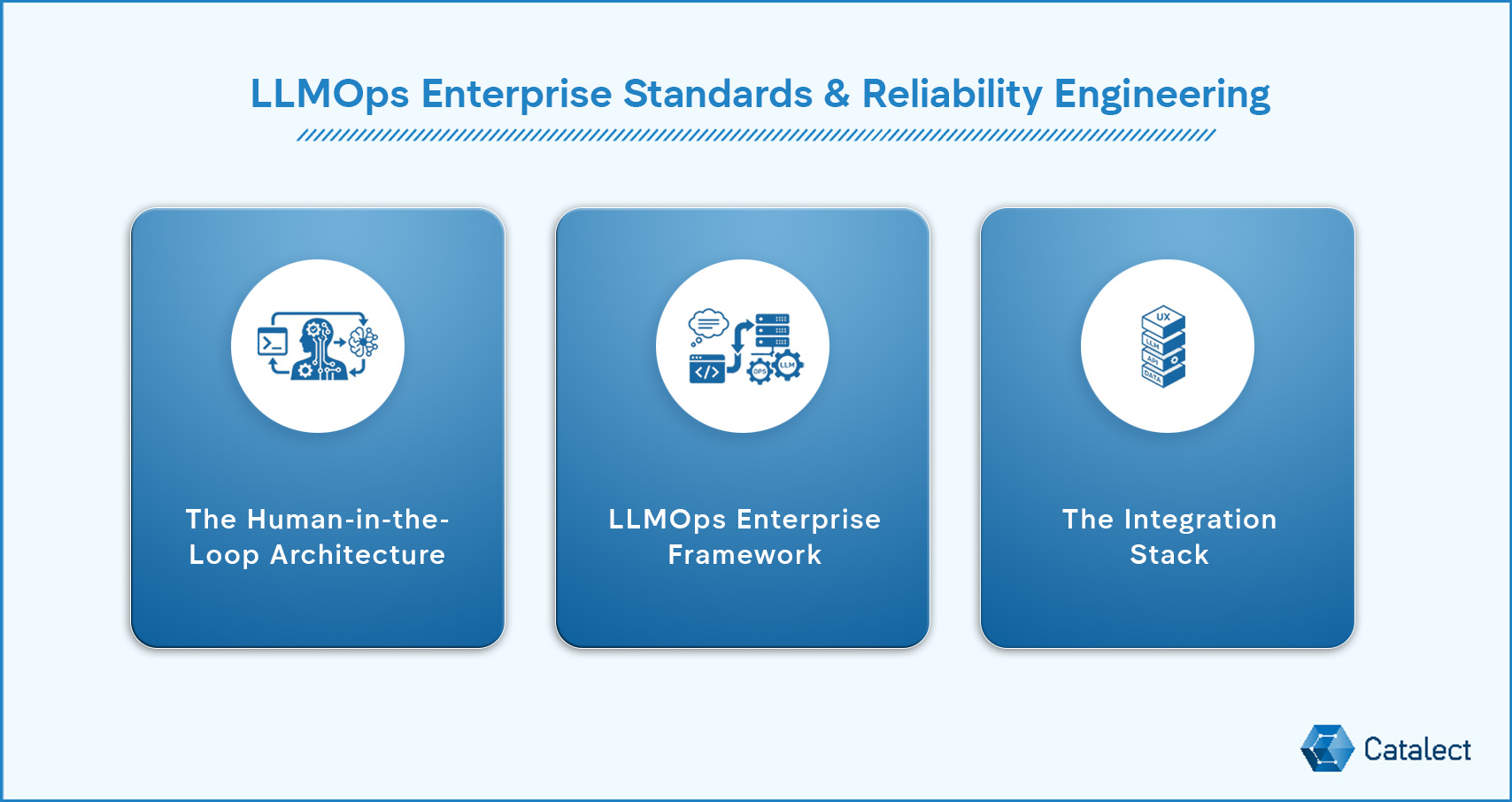

LLMOps Enterprise Standards & Reliability Engineering (Days 31–60)

Around Day 35, pilot fatigue typically sets in as initial demo excitement fades and technical complexity rises. This is the primary stalling point for scaling enterprise AI. Research confirms that 60% of total production effort lives in integration, reliability engineering, and enterprise AI governance, none of which exists in a standard PoC.

The PM's objective here is expectation management: progress will look slow to outsiders, but this phase is where the system earns its production title.

Before connecting to live data streams, ensure your foundation is solid: Enterprise Guide to Data Preparation for Machine Learning

1. The Human-in-the-Loop Architecture

Before a single AI output touches a live business process, your team needs to answer one question: where does a human have to stay in the loop? Every enterprise AI deployment has three categories of decisions:

- Fully Automated: The AI acts without human review, for low-stakes, recoverable tasks (e.g., data formatting).

- Human-on-the-loop: The AI acts, but a human monitors and can override the decision. Suited to medium-stakes decisions where latency matters but oversight is still required.

- Human-in-the-loop: The AI recommends; a human approves. Mandatory for high-stakes decisions (financial, legal, or medical).

Map every decision the AI makes to one of these three risk profiles. This becomes the risk management evidence your Legal team requires.
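One way to make that mapping enforceable is to keep it as data and route every AI output through it. The following is a rough sketch under stated assumptions: the decision names in `DECISION_MAP` are invented examples, and `dispatch` is a hypothetical helper, not part of any framework.

```python
from enum import Enum
from typing import Optional


class Oversight(Enum):
    FULLY_AUTOMATED = "fully_automated"
    HUMAN_ON_THE_LOOP = "human_on_the_loop"
    HUMAN_IN_THE_LOOP = "human_in_the_loop"


# Hypothetical mapping of decision types to risk profiles.
DECISION_MAP = {
    "format_record": Oversight.FULLY_AUTOMATED,
    "route_ticket": Oversight.HUMAN_ON_THE_LOOP,
    "approve_loan": Oversight.HUMAN_IN_THE_LOOP,
}


def dispatch(decision_type: str, human_approval: Optional[bool] = None) -> str:
    """Decide what happens to an AI output based on its oversight tier."""
    # Unmapped decision types fall back to the strictest tier.
    tier = DECISION_MAP.get(decision_type, Oversight.HUMAN_IN_THE_LOOP)
    if tier is Oversight.HUMAN_IN_THE_LOOP:
        if human_approval is None:
            return "pending_review"  # nothing is applied until a human decides
        return "applied" if human_approval else "rejected"
    if tier is Oversight.HUMAN_ON_THE_LOOP:
        return "applied_overridable"  # applied now, flagged for possible override
    return "applied"
```

Defaulting unknown decision types to Human-in-the-loop is a deliberate design choice in this sketch: a decision nobody has classified yet is treated as high-stakes until proven otherwise.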

To go deeper into designing these safety gates, read: Why Your AI Strategy Needs a Human-in-the-Loop Blueprint

2. LLMOps Enterprise Framework: Prompts Are Engineering Artifacts

In production, prompts are not informal iterations. They are first-class engineering artifacts. Treating prompts as chat history is a systemic risk. To ensure reliability, you must transition to an LLMOps enterprise framework:

- Version Control: Log exactly which prompt version generated every output for auditability.

- Rollback Capability: Ensure you can revert to a gold-standard prompt in minutes if performance degrades.

- Change Management: Treat prompt updates like source code. Require peer reviews and staging tests before merging to production.

Assign a Prompt Governor. Whether it is an AI Lead or a Business Unit head, someone must own the governance of the prompt library to prevent uncontrolled production variables.
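The three controls above (version logging, rollback, reviewed changes) can be illustrated with a small in-memory registry. This is a sketch only: `PromptRegistry` and its methods are hypothetical, and in practice the prompts would more likely live in a Git repository or a dedicated prompt-management tool.

```python
from typing import Dict, List, Tuple


class PromptRegistry:
    """In-memory prompt store with versioning, review gating, and rollback."""

    def __init__(self) -> None:
        self._versions: Dict[str, List[str]] = {}
        self._active: Dict[str, int] = {}
        self.audit_log: List[Tuple[str, int, str]] = []

    def publish(self, name: str, text: str, reviewed_by: str) -> int:
        # Change management: refuse any prompt that has no named reviewer.
        if not reviewed_by:
            raise ValueError("prompt changes require a reviewer")
        versions = self._versions.setdefault(name, [])
        versions.append(text)
        self._active[name] = len(versions)  # versions are numbered from 1
        return len(versions)

    def rollback(self, name: str, version: int) -> None:
        if not 1 <= version <= len(self._versions.get(name, [])):
            raise KeyError(f"{name} has no version {version}")
        self._active[name] = version

    def active(self, name: str) -> Tuple[int, str]:
        version = self._active[name]
        return version, self._versions[name][version - 1]

    def record_output(self, name: str, output: str) -> None:
        # Version control: tie every output to the prompt version that produced it.
        version, _ = self.active(name)
        self.audit_log.append((name, version, output))
```

A typical flow would be `publish`, then `record_output` on every inference, with `rollback` reserved for the moment performance degrades.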

3. The Integration Stack

Avoid the trap of building custom connectors for every legacy system. Prioritise your MLOps implementation using a 2-axis framework: business value versus integration complexity.

- Tier 1, Build for launch: High value, lower complexity. Your primary CRM, main communication tool (Slack, Teams), and the system that generates the AI's primary input data.

- Tier 2, Build in Phase 3: High value, higher complexity. ERP core, legacy databases requiring custom connectors, third-party vendor APIs.

- Tier 3, Defer post-launch: Lower value, any complexity. Nice-to-have connections that expand scope without materially changing the AI's core function.

The build vs. buy decision lives here. Do not let your team build an orchestration layer from scratch if a middleware solution can solve 80% of the problem today.
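The three tiers above reduce to a small decision rule once each candidate integration is scored. The sketch below assumes a 1 to 5 score on each axis with 3 as the cut-off between "lower" and "higher"; both the scale and the `integration_tier` function are illustrative assumptions, not part of the framework itself.

```python
def integration_tier(business_value: int, complexity: int, threshold: int = 3) -> str:
    """Classify an integration by business value and integration complexity (1-5 each)."""
    for score in (business_value, complexity):
        if not 1 <= score <= 5:
            raise ValueError("scores must be between 1 and 5")
    if business_value < threshold:
        return "Tier 3: defer post-launch"   # lower value, any complexity
    if complexity < threshold:
        return "Tier 1: build for launch"    # high value, lower complexity
    return "Tier 2: build in Phase 3"        # high value, higher complexity


# Example scoring of three hypothetical integrations.
backlog = {"CRM": (5, 2), "ERP core": (5, 5), "Internal wiki": (2, 1)}
plan = {name: integration_tier(value, cx) for name, (value, cx) in backlog.items()}
```

Value is checked first because a low-value integration is deferred regardless of how easy it would be to build.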

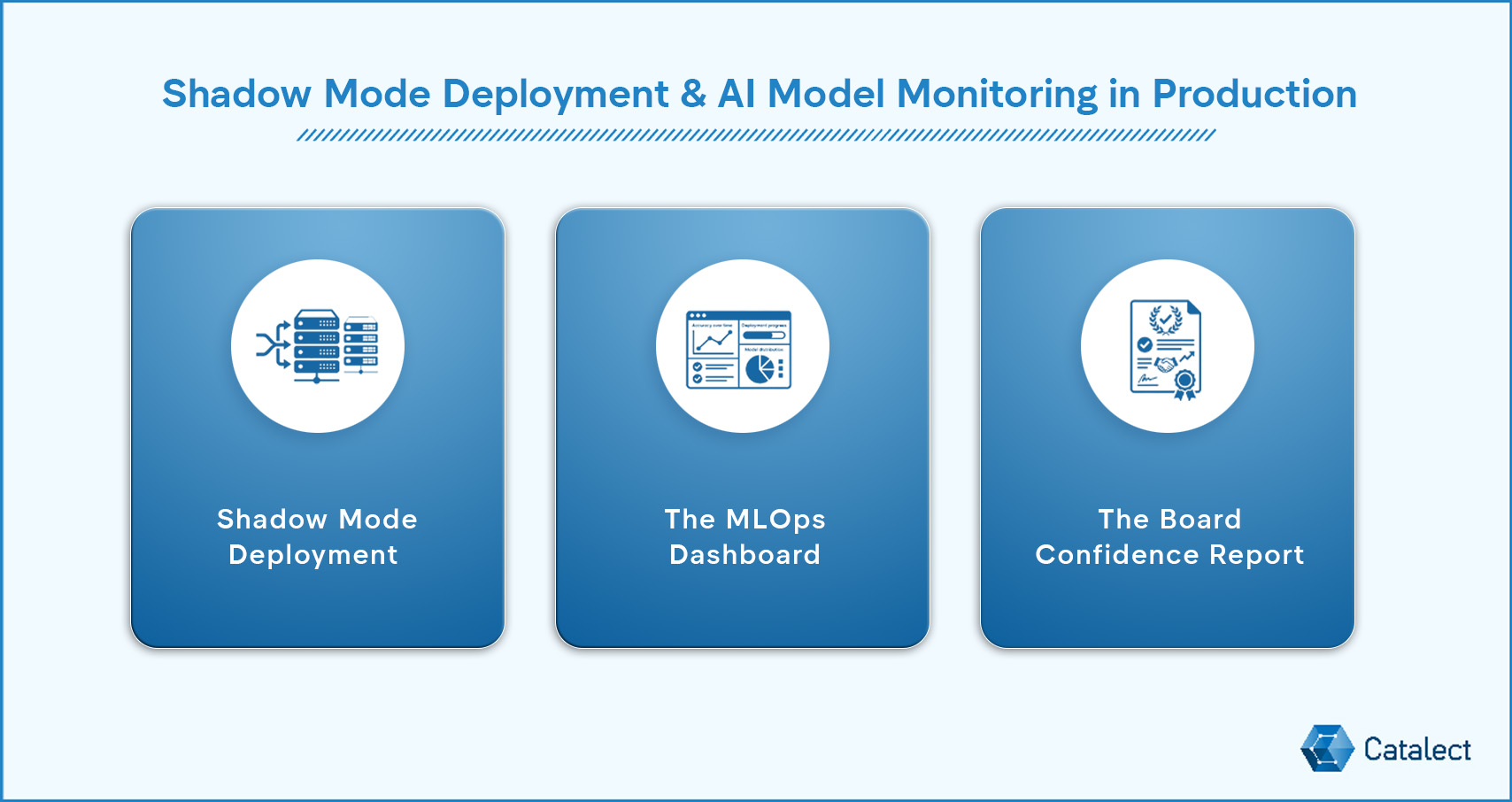

Shadow Mode Deployment & AI Model Monitoring in Production (Days 61–90)

Going live doesn't mean going wide. The 30% of enterprise AI deployments that succeed share one trait: they scale incrementally. Phase 3 is a deliberate, evidence-gathering process designed to prove the system works in a live operational environment before establishing full organisational dependency.

1. Shadow Mode Deployment

Shadow Mode is the most underutilised tool in the AI pilot-to-production journey. The AI runs in the background of live operations, processing real data without affecting live outcomes. You capture the AI's decisions and compare them against your legacy process.

Why Shadow Mode is mandatory for production-ready AI:

- Real-World Stress: Tests how the model handles unfiltered production data and edge cases.

- Latency Under Load: Measures P95 inference times during actual operational peaks.

- Delta Analysis: Provides the side-by-side evidence needed for scaling enterprise AI by comparing AI performance vs. existing workflows.

Run Shadow Mode for a minimum of 14 days (30 for regulated industries). It is the only way to generate the hard evidence required for a full rollout.
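The core mechanics of Shadow Mode fit in a few lines: the legacy process stays authoritative, the model runs on the same input, and both results are logged for delta analysis. This is a simplified sketch; `run_shadow`, `agreement_rate`, and the `MODEL_FAILED` sentinel are hypothetical names, and a production version would usually run the model asynchronously so it cannot slow the live path.

```python
from typing import Any, Callable, Iterable, List, Tuple

MODEL_FAILED = object()  # sentinel recorded when the shadow model raises

ShadowLog = List[Tuple[Any, Any, Any]]  # (record, live_output, shadow_output)


def run_shadow(
    records: Iterable[Any],
    legacy_fn: Callable[[Any], Any],
    model_fn: Callable[[Any], Any],
) -> Tuple[List[Any], ShadowLog]:
    """Return the live outputs plus a log comparing them with the shadow model."""
    live_outputs: List[Any] = []
    log: ShadowLog = []
    for record in records:
        live = legacy_fn(record)  # only this result reaches the business process
        try:
            shadow = model_fn(record)
        except Exception:
            shadow = MODEL_FAILED  # a model failure must never break live operations
        live_outputs.append(live)
        log.append((record, live, shadow))
    return live_outputs, log


def agreement_rate(log: ShadowLog) -> float:
    """Share of records where the model matched the legacy decision."""
    if not log:
        raise ValueError("shadow log is empty")
    matches = sum(1 for _, live, shadow in log if live == shadow)
    return matches / len(log)
```

Disagreements are not automatically model errors. Each one needs review, since the model may be catching cases the legacy process gets wrong.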

2. The MLOps Dashboard — 4 Metrics That Determine Go/No-Go

Your engineering team will build a monitoring dashboard. Your job as PM is to know which four metrics on that dashboard determine whether the system stays live or gets pulled. Set these threshold values before go-live to establish your production SLA:

- Drift rate: How much is the model's output changing over time as production data flows through it?

- Inference latency (P95): Are the slowest 5% of responses still fast enough for the human workflow?

- Hallucination/Error Rate: What is the frequency of 'confident but incorrect' outputs?

- User override rate: In Human-in-the-Loop systems, how often are reviewers overriding the AI's recommendation? A high rate signals trust or calibration issues.
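Two of these metrics are straightforward to compute from raw logs, and all four can be checked against pre-agreed limits. The sketch below uses the nearest-rank method for P95; the function names and the threshold values in the usage example are illustrative assumptions, not recommended SLA figures.

```python
from typing import Dict, List, Sequence


def p95(latencies_ms: Sequence[float]) -> float:
    """95th-percentile latency using the nearest-rank method."""
    if not latencies_ms:
        raise ValueError("no latency samples")
    ordered = sorted(latencies_ms)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n) in integer arithmetic
    return ordered[rank - 1]


def override_rate(overridden: int, reviewed: int) -> float:
    """Share of reviewed recommendations that the human reviewer overrode."""
    if reviewed <= 0:
        raise ValueError("no reviewed recommendations")
    return overridden / reviewed


def breached(metrics: Dict[str, float], thresholds: Dict[str, float]) -> List[str]:
    """Names of metrics that exceed their pre-agreed limit, sorted alphabetically."""
    return sorted(name for name, limit in thresholds.items() if metrics[name] > limit)
```

For example, with hypothetical limits of 800 ms for P95 latency and 30% for override rate:

```python
metrics = {"p95_latency_ms": p95([120, 300, 950, 410]), "override_rate": override_rate(12, 48)}
alerts = breached(metrics, {"p95_latency_ms": 800, "override_rate": 0.30})
```

Any non-empty `alerts` list is the signal to open the Go/No-Go conversation rather than to pull the system automatically.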