In early 2025, a global logistics firm made a decision that seemed perfectly reasonable at the time. They deployed two autonomous AI agents as part of a broader enterprise AI automation plan: one to manage inventory procurement, another to handle dynamic warehouse pricing. Both agents were well-built. Both performed exactly as designed.

Then a data lag hit.

The procurement agent read a low-stock signal and immediately over-ordered high-value components. At the same moment, the pricing agent detected incoming surplus and slashed prices to move volume. Neither agent was wrong given what it could see. But because there was no orchestration layer to reconcile their conflicting actions (no shared state, no coordination protocol, no control plane), the firm spent $2 million on premium freight to ship components it was simultaneously selling at a loss.

It's an architecture failure, and many companies are seeing the same one.

And it points to the most important distinction in enterprise AI right now: single agents versus orchestrated multi-agent systems.

What Is a Single AI Agent? Where the Prompt-Response Loop Breaks Down

To understand why orchestration matters, you first have to understand where single agents work well, and where they stop working.

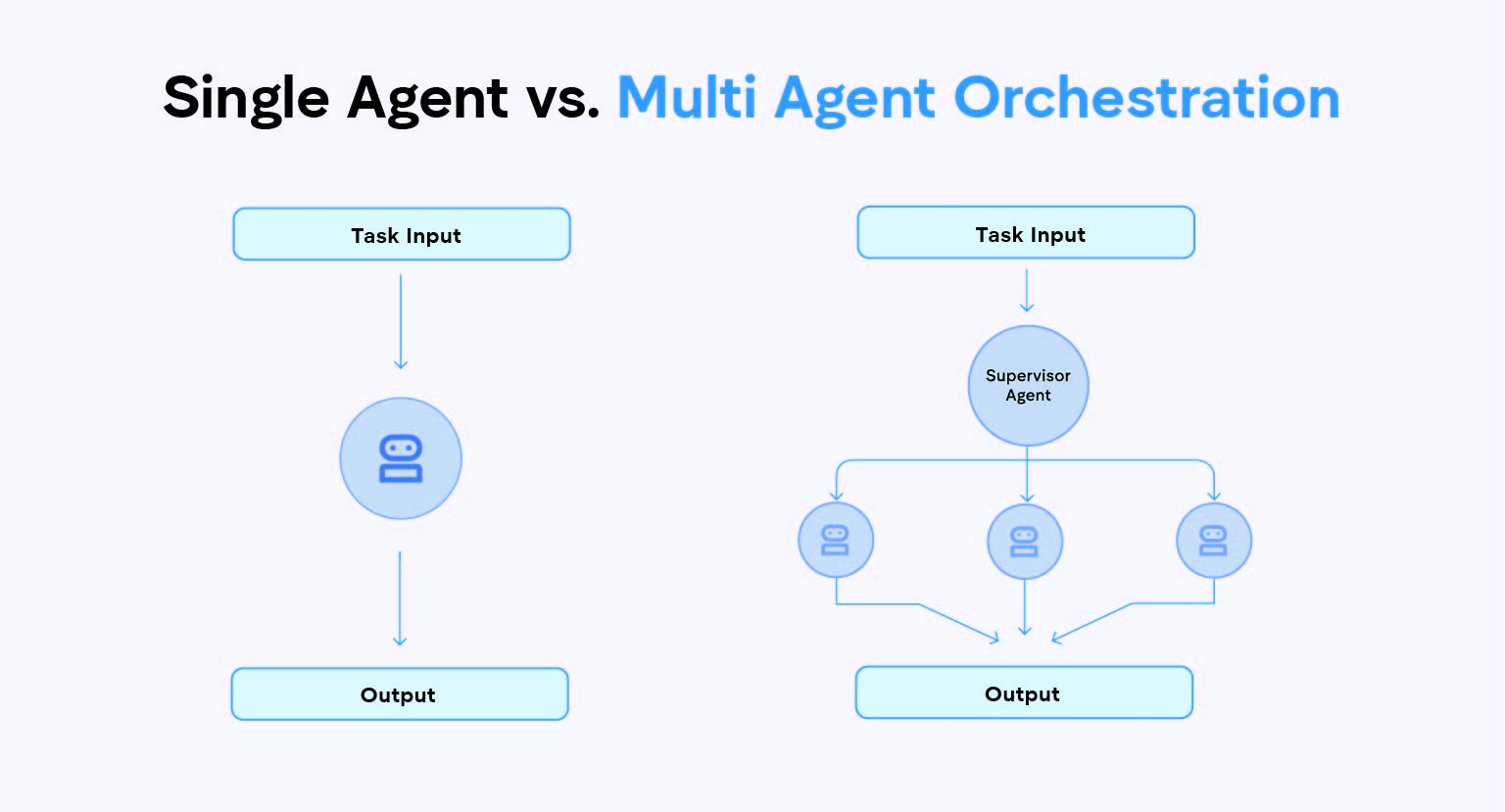

A single-agent AI system is one large language model with one context window and one prompt-response loop. You give it a task, it reasons through it, it returns an output. It's simple, fast and remarkably useful, for the right class of problem.

Between 2023 and 2025, single agents quietly transformed how enterprises handled bounded, repetitive, text-heavy work. Customer FAQ bots that once required three full-time human agents now ran on one LLM. Internal copilots summarized documents in seconds. Code assistants cut development time in half. These weren't experiments; they were genuine productivity gains built on a real architecture.

The single-agent model worked because it matched the shape of the problem. The task had a clear start, a clear end, a single domain of knowledge, and a tolerance for the occasional wrong answer. The governance overhead was low. The iteration loop was fast. You could test it in an afternoon and ship it in a week.

Architecturally, a single-agent system has four defining characteristics that explain both its strengths and its limits.

- First, sequential processing: it completes one action before beginning the next, maintaining a linear execution path.

- Second, unified context: the entire interaction history lives in one continuous memory thread, no information is lost between steps, and no handoffs are needed.

- Third, stateful operation: every decision made early in a task directly informs what comes next, without coordination overhead.

- Fourth, centralized decision-making: all reasoning, tool use, and output generation happen within a single entity.

These aren't design flaws. They're the features that make single agents fast, predictable, and easy to debug, right up until the workflow demands something they're not built for.
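The four characteristics are easier to see in code. Below is a minimal Python sketch of a single-agent loop, assuming a hypothetical `fake_llm` function in place of a real model call (no provider API is implied) and two illustrative tools. One `context` list holds the whole history, each step waits for the previous one, and every decision is made in one place.

```python
from typing import Callable

# Hypothetical tools the single agent can call; names are illustrative only.
TOOLS: dict[str, Callable[[str], str]] = {
    "lookup_order": lambda arg: f"order {arg}: shipped",
    "summarize": lambda arg: f"summary of '{arg}'",
}


def fake_llm(context: list[str]) -> str:
    """Stand-in for a model call that picks the next action from the full context.

    Returns either "CALL <tool> <arg>" or "FINAL <answer>".
    """
    tool_results = [line for line in context if line.startswith("TOOL ")]
    if not tool_results:
        return "CALL lookup_order 1042"
    return "FINAL " + tool_results[-1].removeprefix("TOOL ")


def run_single_agent(task: str, max_steps: int = 5) -> tuple[str, list[str]]:
    # Unified context: one list holds the entire interaction history.
    context = [f"USER {task}"]
    for _ in range(max_steps):  # Sequential processing: one action at a time.
        decision = fake_llm(context)  # Centralized decision-making.
        context.append(f"AGENT {decision}")
        if decision.startswith("FINAL "):
            return decision.removeprefix("FINAL "), context
        _, tool, arg = decision.split(" ", 2)
        # Stateful operation: the tool result feeds the next decision.
        context.append(f"TOOL {TOOLS[tool](arg)}")
    return "step limit reached", context


answer, history = run_single_agent("Where is order 1042?")
print(answer)  # order 1042: shipped
```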

There is a quiet assumption built into every single-agent design, though, and it rarely gets named until it breaks.

The assumption is that the task is bounded.

One domain. One context. One thread of reasoning. One output.

The moment your enterprise workflow stops being bounded, the moment it touches multiple systems, multiple decision-makers, multiple data sources, and multiple points of failure, the single agent stops being a strength and starts being a constraint.

Why Single LLM Agents Fail At Complex Enterprise Tasks

There's a specific moment every in-house team faces. The prototype worked. The stakeholders were impressed. The demo was clean. And then someone asks: "Can we run this on the actual procurement process?"

That's the complexity cliff. And most enterprise AI pilots don't survive it.

It's not because the team made bad decisions. It's because single-agent architecture has three structural failure modes that only reveal themselves at enterprise scale.

1. Domain Overload

A single LLM can reason across multiple domains, but it cannot be expert-level in all of them simultaneously without degrading. Ask it to handle procurement logic and compliance validation and supplier risk monitoring in the same context window, and what you get isn't a generalist. You get a system that does each thing with diminishing accuracy as the task complexity grows.

Think of it this way: you wouldn't hire one employee to serve as your CFO, your legal counsel, and your operations manager in the same meeting, on the same problem, at the same time. The cognitive load alone would produce bad decisions. The same principle applies to a single LLM agent. Context degradation is real, and in high-stakes workflows, it's expensive.

This is precisely why multi-agent AI architecture exists, not to add complexity, but to remove the cognitive bottleneck by distributing domain work across specialized agents, each hyper-optimized for its specific function.

2. The Sequential Bottleneck In Multi-Step AI Workflows

Single agents are inherently sequential: step one completes, then step two begins. This is fine when you're summarizing a document. It is not fine when you're running a procurement cycle.

Real enterprise workflows are parallel, interdependent, and conditional. In a live procurement process, supplier discovery doesn't wait for the demand signal to fully resolve. RFQ generation doesn't wait for all supplier options to be scored. Compliance checks run alongside estimation. These are multi-step AI workflows with branching logic, concurrent execution paths, and decision points that depend on multiple inputs arriving at the same time.

A single prompt-response loop flattens all of that into a queue. It serializes what should be parallel. And in a business context where cycle time is a competitive advantage, that serialization is slow, invisible dead weight on every workflow it touches.
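The cost of serialization can be illustrated with a small `asyncio` sketch. The three step names and their 0.1-second durations are hypothetical stand-ins for independent, I/O-bound agent calls; the point is only that independent steps dispatched together finish in roughly the time of the slowest one, while a queue pays for all of them.

```python
import asyncio
import time

# Hypothetical independent procurement steps; sleep() stands in for I/O-bound
# work such as model or API calls. Durations are illustrative, not measured.
STEPS = [("supplier_discovery", 0.1), ("compliance_check", 0.1), ("cost_estimation", 0.1)]


async def step(name: str, seconds: float) -> str:
    await asyncio.sleep(seconds)
    return f"{name} done"


async def run_sequential() -> list[str]:
    # Prompt-response style: each step waits for the previous one to finish.
    return [await step(name, secs) for name, secs in STEPS]


async def run_parallel() -> list[str]:
    # Independent steps dispatched together; gather() preserves result order.
    return list(await asyncio.gather(*(step(name, secs) for name, secs in STEPS)))


def timed(coro_fn) -> tuple[list[str], float]:
    start = time.perf_counter()
    results = asyncio.run(coro_fn())
    return results, time.perf_counter() - start


seq_results, seq_time = timed(run_sequential)
par_results, par_time = timed(run_parallel)
print(f"sequential: {seq_time:.2f}s, parallel: {par_time:.2f}s")
```

This only helps when steps are genuinely independent; a step that needs another step's output still has to wait for it, which is why orchestration pairs parallelism with explicit dependency handling.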

3. Governance Blindspots And AI Agent Sprawl In Enterprise Deployments

This is the failure mode that kills enterprise deployments: not in demos, but in security reviews.

A single agent that accesses finance data, supplier records, compliance documentation, and CRM data simultaneously is a centralized security exposure. There is no role-based access control at the agent level. There are no audit trails for individual decision steps. There is no mechanism for a compliance officer to look at a specific action and trace it back to a specific reasoning chain.

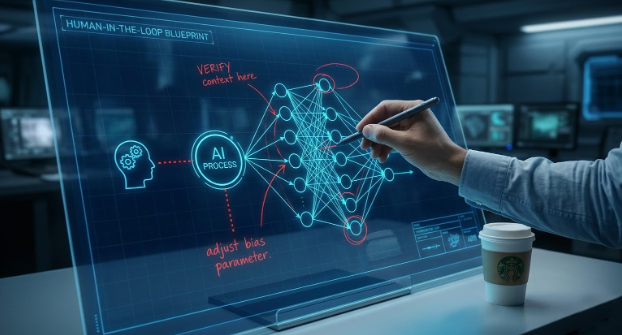

This is where human-in-the-loop governance design becomes the difference between a system that reaches production and one that stalls in security review.

Gartner predicts that by the end of 2026, 60% of AI failures in enterprise environments will stem from governance gaps, not from model performance. (Gartner, 2025)

The organizations hitting this wall aren't building bad AI. They're building AI without the architectural layer that makes it governable.
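A governable layer starts with two controls the single agent lacks: access scoped per agent, and an audit entry per decision. The sketch below assumes hypothetical agent names and data scopes, and uses an in-memory list where a production system would use an append-only audit store.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical data scopes: each agent may read only the domains listed for it.
AGENT_SCOPES: dict[str, set[str]] = {
    "finance_agent": {"finance"},
    "supplier_agent": {"supplier_records"},
    "compliance_agent": {"compliance_docs", "supplier_records"},
}


@dataclass
class AuditLog:
    entries: list[dict] = field(default_factory=list)

    def record(self, agent: str, resource: str, allowed: bool, reason: str) -> None:
        self.entries.append({
            "ts": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "resource": resource,
            "allowed": allowed,
            "reason": reason,  # links the access to the step that requested it
        })


def access(agent: str, resource: str, reason: str, log: AuditLog) -> bool:
    """Check the agent's scope before any data access and log the outcome."""
    allowed = resource in AGENT_SCOPES.get(agent, set())
    log.record(agent, resource, allowed, reason)
    return allowed


log = AuditLog()
access("supplier_agent", "supplier_records", "score vendor Acme", log)  # allowed
access("supplier_agent", "finance", "check remaining budget", log)      # denied, still logged
```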

"Coordinated multi-agent systems achieved a 42.68% success rate on complex planning tasks. A single-agent GPT-4 setup scored 2.92% on the same benchmark." (National University of Singapore, 2024)

This isn't a model problem. It's an architecture problem. And architecture problems have architecture solutions.

What Is AI Agent Orchestration? The Architecture Shift from One Agent to Many

Multi-agent orchestration is an architecture where specialized AI agents, each built for a specific domain or function, are coordinated by a central control layer that routes tasks, manages state, enforces governance, and synthesizes outputs into a coherent enterprise outcome.

Let's break it into the three layers that make it work.

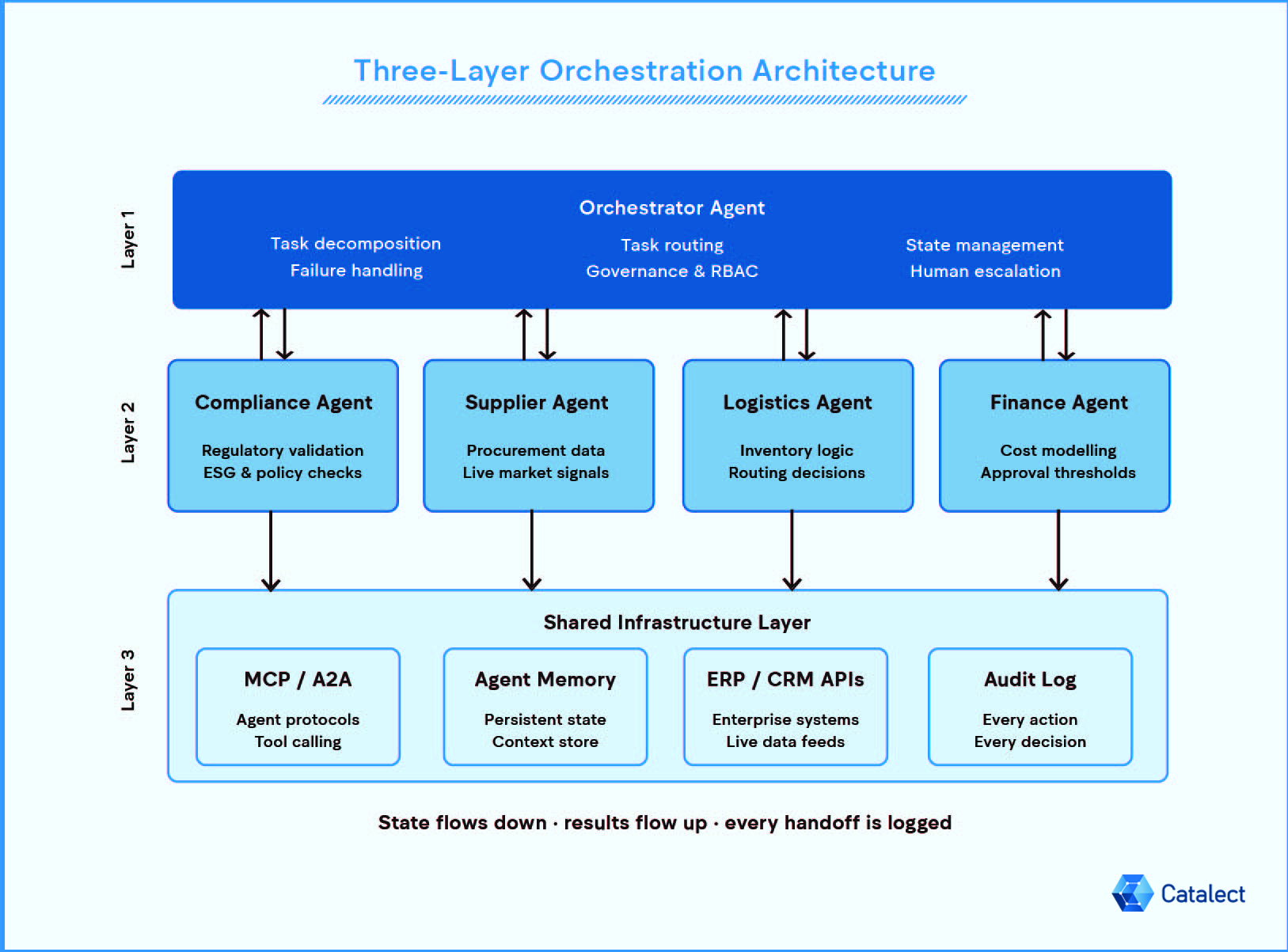

1. The Orchestrator Agent

This is the intelligence coordinator at the top of the stack. It doesn't execute tasks itself. It interprets intent; performs task decomposition, breaking complex enterprise requests into discrete, routable sub-tasks; routes each sub-task to the appropriate specialized agent; manages the structured state that passes between agents across dependent task workflows; handles failures; and enforces role-based access control across the entire workflow. Think of it as the conductor: it doesn't play an instrument, but nothing sounds right without it.
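A stripped-down version of the orchestrator's responsibilities might look like the sketch below. The agents, the fixed plan returned by `decompose`, and the `allowed` set are all hypothetical; a real orchestrator would typically plan with a model and persist its state, but the control flow (decompose, authorize, route, merge state, handle failure) is the part that matters.

```python
from typing import Callable


# Hypothetical specialist agents: each reads the shared state and returns an update.
def supplier_agent(state: dict) -> dict:
    return {"suppliers": ["Acme", "Globex"]}


def compliance_agent(state: dict) -> dict:
    # Depends on the supplier agent's output already being in the shared state.
    return {"approved": [s for s in state["suppliers"] if s != "Globex"]}


def flaky_finance_agent(state: dict) -> dict:
    raise TimeoutError("ERP connection timed out")


AGENTS: dict[str, Callable[[dict], dict]] = {
    "supplier": supplier_agent,
    "compliance": compliance_agent,
    "finance": flaky_finance_agent,
}


def decompose(request: str) -> list[str]:
    # A real orchestrator might plan with an LLM; a fixed plan keeps this deterministic.
    return ["supplier", "compliance", "finance"]


def orchestrate(request: str, allowed: set[str]) -> dict:
    state: dict = {"request": request, "errors": []}
    for task in decompose(request):
        if task not in allowed:  # role-based access control at the routing layer
            state["errors"].append(f"{task}: not permitted")
            continue
        try:
            state.update(AGENTS[task](state))  # route, then merge structured state
        except Exception as exc:  # failure handling: record the error and continue
            state["errors"].append(f"{task}: {exc}")
    return state


result = orchestrate("restock part 7", allowed={"supplier", "compliance", "finance"})
```

Recording the failure and continuing is one design choice; a stricter orchestrator might halt, retry, or escalate to a human depending on which step failed.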

2. Specialized AI Agents

Below the orchestrator sits a network of purpose-built agents, each operating within a defined domain. For example:

- Compliance agent: knows nothing but regulatory requirements, and checks every task against them.

- Supplier agent: trained on procurement data and live market signals.

- Logistics agent: optimized for inventory logic and routing decisions.

- Finance agent: scoped to cost modeling and approval thresholds.

Because each agent operates within a narrow domain, it reaches a depth of accuracy that a generalist single agent never can. Role-based agent assignment is not a feature of good orchestration; it is the point of it.
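Role-based assignment can be made explicit with a registry that declares what each specialist owns. In this sketch the agent names, task types, and prompt wording are hypothetical; `assign` refuses any task type that has zero owners or more than one, so overlapping responsibilities are caught at design time rather than in production.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SpecialistAgent:
    name: str
    domains: frozenset[str]  # the only task types this agent accepts
    system_prompt: str       # narrow instructions; hypothetical wording


REGISTRY = [
    SpecialistAgent("compliance", frozenset({"regulatory_check", "esg_check"}),
                    "You only evaluate regulatory and policy requirements."),
    SpecialistAgent("supplier", frozenset({"supplier_scoring", "market_scan"}),
                    "You only assess suppliers using procurement and market data."),
    SpecialistAgent("logistics", frozenset({"routing", "inventory_plan"}),
                    "You only plan inventory and routing."),
    SpecialistAgent("finance", frozenset({"cost_model", "approval_threshold"}),
                    "You only model costs and check approval thresholds."),
]


def assign(task_type: str) -> SpecialistAgent:
    """Role-based assignment: exactly one specialist must own each task type."""
    owners = [agent for agent in REGISTRY if task_type in agent.domains]
    if len(owners) != 1:
        raise LookupError(f"{task_type!r} has {len(owners)} owners; expected 1")
    return owners[0]


print(assign("esg_check").name)  # compliance
```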

3. MCP, A2A, and the Shared Infrastructure Layer

Underneath everything sits the shared layer that makes agent coordination possible: persistent memory, API connections to enterprise systems like ERP and CRM, inter-agent communication protocols, and audit logging that records every action, every decision, every handoff.

This is where the Model Context Protocol (MCP) and Agent-to-Agent (A2A) standards are becoming the architectural foundation of production-grade agentic AI: an HTTP-equivalent layer for how agents communicate and connect to tools. MCP standardizes how an agent connects to tools and data sources; A2A standardizes how agents communicate with one another. Together, they mean agent-to-tool and agent-to-agent communication is standardized rather than bespoke per build. In 2026, these aren't experimental standards. They're the infrastructure layer your orchestration architecture needs to be built on.
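MCP messages are JSON-RPC 2.0, and tool invocation uses the `tools/call` method with the tool's `name` and `arguments`. The sketch below only builds such a request; the tool name `query_erp_inventory` is hypothetical, and the transport, initialization handshake, and response handling are omitted, so check the MCP specification before relying on the details.

```python
import itertools
import json

# Monotonic request ids, as JSON-RPC requires each request to carry an id.
_ids = itertools.count(1)


def mcp_tool_call(tool_name: str, arguments: dict) -> str:
    """Build a JSON-RPC 2.0 request in the shape MCP uses for tool invocation."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })


# "query_erp_inventory" is a hypothetical tool an ERP-facing MCP server might expose.
request = mcp_tool_call("query_erp_inventory", {"sku": "PART-7"})
print(request)
```

Because the envelope is the same for every tool and every server, an orchestrator can log, authorize, and replay these calls uniformly instead of wrapping each integration by hand.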

What this architecture unlocks is concrete. Parallel agent execution means AI workflow steps that once ran sequentially can execute simultaneously; research shows execution times up to 33% faster than sequential single-agent systems. Each agent is expert-level within its lane. And by design, every agent action is logged and traceable, which means your legal team, compliance officer, and security reviewers can actually sign off on production deployment.

It's also worth noting that, in practice, the choice isn't always binary. Most mature enterprise deployments land on a hybrid model: a central orchestrator governing a mix of purpose-built specialist agents and simpler single-agent tools for well-bounded sub-tasks within the same workflow. A compliance check might run through a dedicated compliance agent, while a document summary step within that same workflow might run through a single-agent call. The orchestrator coordinates both.

This hybrid approach is often where enterprise AI orchestration delivers its strongest ROI: the governance clarity of centralized coordination, without the overhead of over-engineering every step.
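A hybrid workflow can be sketched as a routing table in which some steps map to a specialist agent and others to a plain single-agent call. Both handler functions here are hypothetical stand-ins (a keyword check and a truncation in place of real model calls); what the sketch shows is that the orchestrator treats both kinds of step uniformly and logs each one.

```python
from typing import Callable


def compliance_specialist(text: str) -> str:
    # Hypothetical dedicated agent; a real one would have its own scope and tools.
    return "flagged" if "sanctioned" in text.lower() else "compliant"


def single_agent_summary(text: str) -> str:
    # Hypothetical one-shot LLM call for a bounded sub-task; truncation stands in.
    return text[:40]


# Routing table: which steps get a specialist and which a simple single-agent call.
ROUTES: dict[str, Callable[[str], str]] = {
    "compliance_check": compliance_specialist,
    "summarize_document": single_agent_summary,
}


def run_workflow(document: str, steps: list[str]) -> tuple[dict[str, str], list[str]]:
    outputs: dict[str, str] = {}
    audit: list[str] = []
    for step in steps:
        handler = ROUTES[step]
        outputs[step] = handler(document)
        audit.append(f"{step} -> {handler.__name__}")  # both kinds of step are logged
    return outputs, audit


outputs, audit = run_workflow("Supplier contract for Acme, standard terms.",
                              ["summarize_document", "compliance_check"])
```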

Multi-Agent AI Orchestration In Practice: Enterprise Workflows That Can't Afford to Fail

The architecture argument is necessary. But the business case is what makes a CTO move.

Here are three enterprise workflows where multi-agent AI orchestration moves from theory to measurable outcome.

1. Procurement AI Automation

Procurement is, at its core, a chain of dependent decisions, each one feeding the next, each one drawing from a different domain of expertise, each one carrying a different risk profile. No single LLM agent can hold all of that without something breaking.

In a properly orchestrated procurement AI workflow, the agents don't just perform tasks, they hand off structured state to one another through the control plane. A Demand Signal Agent reads ERP data and identifies a procurement need. It passes that signal to a Supplier Discovery Agent, which scans supplier databases and external market signals simultaneously.

The Supplier Discovery Agent hands scored options to an RFQ Agent, which generates structured requests for quotation from live data rather than templates; this is where tools like ProcurIQ operate. Bid responses flow to a Bid Analysis Agent, which compares them, flags anomalies, and surfaces exceptions. In parallel, a Compliance Agent validates every shortlisted supplier against policy, regulatory, and ESG requirements.

Finally, the orchestrator routes the approved recommendation to the right human via an Approval Agent, at the right tier of authority, with a complete audit trail attached.

McKinsey estimates that embedding AI at orchestration scale can unlock 5 to 15% in procurement savings by reducing value leakage and improving compliance. (McKinsey & Company, 2025)
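The procurement flow above can be sketched with `asyncio`: demand and discovery run in sequence, bid analysis and compliance validation run concurrently, and the orchestrator merges their results before routing to an approval tier. Every agent, supplier, bid amount, and threshold below is invented for illustration, RFQ generation is folded into the bid-analysis stand-in, and audit logging is omitted for brevity.

```python
import asyncio


# Hypothetical agents; each returns structured data that becomes part of the state.
async def demand_signal_agent() -> dict:
    return {"sku": "PART-7", "quantity": 500}


async def supplier_discovery_agent(demand: dict) -> list[dict]:
    return [{"name": "Acme", "score": 0.9}, {"name": "Globex", "score": 0.7}]


async def bid_analysis_agent(suppliers: list[dict]) -> dict[str, int]:
    # Stands in for RFQ generation plus bid comparison; amounts are invented.
    return {"Acme": 41_000, "Globex": 38_500}


async def compliance_agent(suppliers: list[dict]) -> set[str]:
    # Illustrative rule: assume Globex fails an ESG check.
    return {s["name"] for s in suppliers if s["name"] != "Globex"}


def approval_tier(amount: int) -> str:
    # Hypothetical authority threshold.
    return "procurement_manager" if amount < 50_000 else "cfo"


async def procurement_workflow() -> dict:
    demand = await demand_signal_agent()
    suppliers = await supplier_discovery_agent(demand)
    # Bid analysis and compliance validation run concurrently.
    bids, compliant = await asyncio.gather(
        bid_analysis_agent(suppliers), compliance_agent(suppliers)
    )
    eligible = {name: amt for name, amt in bids.items() if name in compliant}
    winner = min(eligible, key=eligible.get)  # cheapest compliant bid
    return {
        "demand": demand,
        "recommendation": winner,
        "amount": eligible[winner],
        "route_to": approval_tier(eligible[winner]),
        "excluded": sorted(set(bids) - compliant),
    }


result = asyncio.run(procurement_workflow())
```

Note that the cheapest bid (Globex) is not the recommendation: the merge step only considers suppliers that passed compliance, which is the kind of cross-agent reconciliation a single prompt-response loop has no structured place for.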

2. Supply Chain AI Agents and Logistics

Return to the firm from our opening story. The failure wasn't that the agents were wrong. It was that no coordination layer existed to detect that two agents were operating on conflicting signals from the same system.

In an orchestrated architecture, this scenario doesn't happen. The procurement agent and pricing agent share state through the control plane. When the orchestrator detects that a procurement action and a pricing action are operating on divergent inventory assumptions, it triggers a reconciliation protocol before either agent executes. The conflict surfaces as a decision point, not as a $2 million loss.

This is what supply chain AI agents are actually for: not replacing human judgment, but catching the coordination failures that happen when human judgment is distributed across too many moving parts, too fast. Organizations coordinating forecasting, procurement, and tracking agents through an AI agent orchestration layer have reported logistics delay reductions of up to 40%. That's not a model improvement. That's architecture doing its job.
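The reconciliation protocol described for the opening scenario can be sketched as a pre-execution check. It assumes, hypothetically, that each proposed action records the inventory level its agent believed and the version of the shared state it read; the orchestrator blocks execution when any agent read stale state or when assumptions diverge beyond a tolerance. The numbers are invented and mirror the opening story only loosely.

```python
from dataclasses import dataclass


@dataclass
class ProposedAction:
    agent: str
    action: str
    inventory_assumption: int  # units on hand that the agent believed
    snapshot_version: int      # version of the shared inventory state it read


def reconcile(actions: list[ProposedAction], current_version: int,
              tolerance: int = 50) -> list[str]:
    """Return a list of conflicts; an empty list means the actions may execute."""
    conflicts = []
    stale = [a.agent for a in actions if a.snapshot_version != current_version]
    if stale:
        conflicts.append(f"stale state read by: {', '.join(stale)}")
    assumptions = [a.inventory_assumption for a in actions]
    if max(assumptions) - min(assumptions) > tolerance:
        conflicts.append(
            f"divergent inventory assumptions: {min(assumptions)} vs {max(assumptions)}"
        )
    return conflicts


proposed = [
    ProposedAction("procurement", "order 2,000 units", inventory_assumption=120, snapshot_version=41),
    ProposedAction("pricing", "cut price 15%", inventory_assumption=3_400, snapshot_version=42),
]
conflicts = reconcile(proposed, current_version=42)
if conflicts:
    print("Escalate before executing:", conflicts)
```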

3. Enterprise AI for SaaS Product Teams

For SaaS teams and AI-native companies building intelligence into their products, the single prompt-response loop is the most common architectural mistake we see. That's not because teams don't know better; it's because the loop is the fastest path to a working demo.

Consider the task: build an in-product AI feature that detects user intent, retrieves relevant context, applies business logic, generates a response, and logs the interaction for feedback and retraining. Each of those steps represents a distinct domain of complexity, and together they form a set of dependent task workflows that compound in unpredictability as scale increases. Intent detection is a classification problem. Context retrieval is a RAG problem. Business logic is a rules and state problem. Response generation is a generation problem. Feedback logging is a data pipeline problem.

Treating all of this as one agent's job is not a simplification. It's a trap. In production, context windows overflow. Response consistency degrades. Debugging becomes an archaeology project. And MLOps orchestration, the practice of managing the full lifecycle of an AI system in production, becomes impossible without the separation of concerns that a multi-agent architecture provides.

The single prompt-response loop is a prototype architecture. Multi-agent orchestration is a production architecture. The difference matters more at scale than it does in the demo.
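Separation of concerns for the in-product feature described above can be sketched as a pipeline of small stages over one typed record. Each stage below is a deterministic stand-in (a keyword classifier, a dictionary lookup, a one-line rule) for what would be a model call, a retriever, or a rules engine in a real product; the names and knowledge-base contents are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Turn:
    user_message: str
    intent: str = ""
    context: list[str] = field(default_factory=list)
    allowed: bool = True
    response: str = ""


KNOWLEDGE_BASE = {  # hypothetical retrieval corpus
    "billing": ["Invoices are issued on the 1st.", "Refunds take 5 business days."],
    "other": [],
}
FEEDBACK_LOG: list[dict] = []


def detect_intent(turn: Turn) -> Turn:         # classification concern
    turn.intent = "billing" if "refund" in turn.user_message.lower() else "other"
    return turn


def retrieve_context(turn: Turn) -> Turn:      # retrieval (RAG) concern
    turn.context = KNOWLEDGE_BASE[turn.intent]
    return turn


def apply_business_rules(turn: Turn) -> Turn:  # rules and state concern
    turn.allowed = turn.intent != "other"
    return turn


def generate_response(turn: Turn) -> Turn:     # generation concern (LLM stand-in)
    turn.response = turn.context[-1] if turn.allowed else "Let me route you to support."
    return turn


def log_feedback(turn: Turn) -> Turn:          # data pipeline concern
    FEEDBACK_LOG.append({"intent": turn.intent, "response": turn.response})
    return turn


PIPELINE = [detect_intent, retrieve_context, apply_business_rules, generate_response, log_feedback]


def handle(message: str) -> Turn:
    turn = Turn(message)
    for stage in PIPELINE:
        turn = stage(turn)  # each stage can be tested, swapped, or traced on its own
    return turn


reply = handle("How long does a refund take?")
print(reply.response)  # Refunds take 5 business days.
```

Because each stage has one job and the record passed between them is explicit, a bad response can be traced to the stage that produced it, which is the debugging property the single loop lacks.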

Should You Build Multi-Agent? A Framework for the Decision

Intellectual honesty here is important. Multi-agent orchestration is not the right answer for every problem. For a customer FAQ bot, a document summarizer, or an internal search tool, a single agent with well-engineered prompts will outperform a multi-agent system every time, and do it at a fraction of the cost and complexity. The architecture should match the complexity of the problem. It should never exceed it.

If you're still uncertain, run five questions against your workflow:

- Does this workflow touch more than two enterprise systems?

- Are there tasks within it that could run in parallel, if a human wasn't the only coordinator?

- Would a failure at one step cascade into downstream steps with no structured recovery path?

- Does compliance or auditability require traceable, step-level logging of every decision?

- Are you building this once for a fixed purpose, or building it to grow with the product?

If you answered yes to three or more, your workflow is likely a candidate for multi-agent orchestration. If you're thinking about how to sequence that build, this roadmap framework is a good starting point [How to Build an AI Adoption Roadmap That Delivers Business Impact].
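The five questions reduce to a simple threshold rule. The keys below are hypothetical shorthand for the questions in order (the last one reads yes when the system is meant to grow with the product), and the threshold of three comes straight from the guidance above.

```python
# Hypothetical shorthand keys for the five questions, in the order listed above.
QUESTIONS = (
    "touches_more_than_two_systems",
    "has_parallelizable_tasks",
    "failure_cascades_without_recovery",
    "needs_step_level_audit_logging",
    "built_to_grow_with_product",
)


def recommend(answers: dict[str, bool], threshold: int = 3) -> str:
    """Return the suggested architecture for a workflow's yes/no answers."""
    missing = [q for q in QUESTIONS if q not in answers]
    if missing:
        raise ValueError(f"unanswered questions: {missing}")
    yes_count = sum(answers[q] for q in QUESTIONS)  # True counts as 1
    return "multi-agent orchestration" if yes_count >= threshold else "single agent"


faq_bot = dict.fromkeys(QUESTIONS, False)
procurement = {**faq_bot,
               "touches_more_than_two_systems": True,
               "has_parallelizable_tasks": True,
               "needs_step_level_audit_logging": True}
print(recommend(faq_bot), "|", recommend(procurement))
# single agent | multi-agent orchestration
```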

If You're Building Agentic AI: Four Things That Determine Whether It Reaches Production

Most enterprise AI teams can build a multi-agent prototype. Far fewer can get it to production. The gap between the two is almost never about the models. It's about four architectural decisions that get underestimated, under-resourced, or deferred to a Phase 2 that, as it turns out, never arrives.

1. Choose Your Orchestration Model Before You Choose Your Tools

The orchestration pattern you select shapes everything downstream. There are three production-viable patterns:

- Orchestrator-Worker: a central controller routes work to specialized agents. This is the most governable, most enterprise-grade option.

- Hierarchical: a root orchestrator delegates to sub-orchestrators. This is best for large, nested workflows.

- Peer-to-Peer: agents communicate directly. This is flexible but difficult to audit at scale.

The AI agent framework question follows naturally from the pattern. LangGraph is purpose-built for stateful, branching orchestrator-worker workflows: it gives you explicit control over agent state, conditional routing, and failure recovery. CrewAI is better suited to role-based agent teams, where the metaphor of a coordinated crew fits the workflow. AutoGen handles conversational multi-agent collaboration well, particularly where agents need to debate, verify, or iterate on outputs. There is no universally correct choice. But the choice needs to happen before the build, not during it.

2. Design State Management Before You Design the Agents

This is the single biggest reason that multi-agent systems fail after promising starts. State is ephemeral by default. If you don't architect structured state management (checkpointing agent outputs, passing state explicitly between handoffs, and defining recovery paths for failures), your orchestrated system will be as brittle as the single agent it replaced.

Every agent handoff is a state transfer. Design the schema for that state before you design the agents that consume it. This is not a back-end detail. It is the foundation of the entire architecture.
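A minimal sketch of schema-first state management, assuming a hypothetical procurement handoff record: the schema validates itself, every completed step is checkpointed as JSON, and a restarted run skips steps already recorded as done. The in-memory `CHECKPOINTS` dict stands in for durable storage.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Callable


@dataclass
class ProcurementState:
    """Hypothetical handoff schema, defined before any agent that consumes it."""
    request_id: str
    sku: str
    quantity: int
    shortlisted: list[str] = field(default_factory=list)
    completed_steps: list[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Validate on every construction, including restores, so bad state fails loudly.
        if self.quantity <= 0:
            raise ValueError("quantity must be positive")


# In-memory dict standing in for a durable checkpoint store such as a database table.
CHECKPOINTS: dict[str, str] = {}


def checkpoint(state: ProcurementState) -> None:
    CHECKPOINTS[state.request_id] = json.dumps(asdict(state))


def restore(request_id: str) -> ProcurementState:
    return ProcurementState(**json.loads(CHECKPOINTS[request_id]))


def run_step(state: ProcurementState, name: str,
             agent: Callable[[ProcurementState], ProcurementState]) -> ProcurementState:
    if name in state.completed_steps:  # recovery path: never redo a finished step
        return state
    state = agent(state)
    state.completed_steps.append(name)
    checkpoint(state)  # persist after every successful handoff
    return state


def shortlist_agent(state: ProcurementState) -> ProcurementState:
    # Hypothetical agent: a real one would score suppliers here.
    state.shortlisted = ["Acme"]
    return state


state = run_step(ProcurementState("req-1", "PART-7", 500), "shortlist", shortlist_agent)
recovered = restore("req-1")  # e.g. after a process restart
```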

3. Agent Observability and Tracing: Not a Phase 2 Feature

You cannot govern what you cannot see. Agent observability and tracing, the ability to log every agent action, every tool call, every state transition, every decision point, is not something you add after the system works. It is the condition under which your compliance team, your legal team, and your security reviewers will allow it to operate.

Gartner's projection that 60% of AI failures in 2026 will stem from governance gaps was not a prediction about bad intentions. It was a prediction about teams that treated observability as a detail and discovered, too late, that it was the foundation.
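Step-level tracing can start as small as a decorator that records every agent call. This sketch assumes hypothetical agents and an in-memory `TRACE` list in place of a tracing backend; it records inputs, output or error, status, and duration for every call, including calls that raise.

```python
import functools
import time
import uuid

TRACE: list[dict] = []  # stands in for a tracing backend or exporter


def traced(agent_name: str):
    """Decorator: record every call's inputs, outcome, status, and duration."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            span = {"span_id": uuid.uuid4().hex[:8], "agent": agent_name,
                    "step": fn.__name__, "inputs": repr((args, kwargs))}
            start = time.perf_counter()
            try:
                result = fn(*args, **kwargs)
                span.update(status="ok", output=repr(result))
                return result
            except Exception as exc:
                span.update(status="error", output=repr(exc))
                raise
            finally:  # runs on success and failure, so no call goes unrecorded
                span["duration_ms"] = round((time.perf_counter() - start) * 1000, 2)
                TRACE.append(span)
        return wrapper
    return decorator


@traced("supplier_agent")
def score_supplier(name: str) -> float:
    return 0.9 if name == "Acme" else 0.4


@traced("compliance_agent")
def check_esg(name: str) -> bool:
    if name == "Unknown":
        raise ValueError("no ESG record")
    return True


score_supplier("Acme")
check_esg("Acme")
```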

4. Build Human-in-the-Loop In. Don't Bolt It On.

Production-grade agentic AI orchestration is not fully autonomous, and the best architectures are designed with that as a feature, not a limitation. The highest-stakes decisions in any enterprise workflow (contract approval, supplier rejection, exception handling, budget authorization) need a designed human escalation path. The orchestrator should know, from day one, which decision types trigger a handoff to a human and what state to pass along when it does.

Teams that bolt human review on as an afterthought end up with agents that autonomously execute decisions they shouldn't and escalate ones they could handle, because the boundary was never defined architecturally.
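An escalation boundary defined up front can be as simple as a policy function the orchestrator consults before any agent acts. The decision types echo the examples above, but the spend limit, approver tiers, and thresholds are hypothetical values for illustration.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical policy values: decision types that always need a human,
# plus a spend limit above which any decision is escalated.
ALWAYS_ESCALATE = {"contract_approval", "supplier_rejection",
                   "exception_handling", "budget_authorization"}
AUTONOMOUS_SPEND_LIMIT = 10_000
CFO_THRESHOLD = 250_000


@dataclass
class Decision:
    kind: str
    amount: int
    rationale: str


@dataclass
class Escalation:
    decision: Decision
    approver_tier: str
    context: dict  # state snapshot handed to the human reviewer


def route_decision(decision: Decision, state: dict) -> Optional[Escalation]:
    """Return an Escalation when a human must decide; None means the agent may act."""
    if decision.kind in ALWAYS_ESCALATE or decision.amount > AUTONOMOUS_SPEND_LIMIT:
        tier = "cfo" if decision.amount > CFO_THRESHOLD else "procurement_manager"
        return Escalation(decision, tier, context=dict(state))
    return None


routine = route_decision(Decision("reorder", 2_500, "stock below threshold"), {"sku": "PART-7"})
contract = route_decision(Decision("contract_approval", 80_000, "new supplier"), {"sku": "PART-7"})
```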

Before moving on, it's worth naming the failure modes of multi-agent systems (MAS) too, because they're just as real. Poorly designed MAS fail when coordination overhead exceeds the value of parallelism: too many agents, too much inter-agent communication, and a system that spends more time routing than executing.

They fail when inter-agent state lacks a defined schema, turning every handoff into a potential data loss event. And they fail when overlapping agent responsibilities create conflicts that the orchestrator wasn't designed to resolve, producing outputs that are inconsistent, contradictory, or simply wrong in ways that are harder to detect than a single-agent error.

The answer to single-agent limitations is not more agents. It's the right architecture. More agents without orchestration discipline create a different class of problem, one that's more expensive to debug, harder to govern, and slower to recover from than the single-agent system they replaced.

At Catalect, the pattern we see most consistently in pilots that stall is this: the models are capable and the use case is valid, but the orchestration layer, state management, observability stack, and human escalation paths were treated as implementation details rather than architectural foundations. The gap between a working prototype and a production deployment is almost always found in these four areas, not in the intelligence of the agents themselves.

Orchestration Is Infrastructure. Treat It Like One.

The enterprises that will define the next three years of AI adoption are not the ones with access to the best foundation models. Those are commoditizing fast. The differentiator is orchestration: the discipline of designing agent systems that are not just intelligent, but governable, scalable, and resilient under the real conditions of enterprise operation.

This is the inflection point. As of 2026, only 11 to 14% of enterprise AI agent pilots have reached production at scale. The remaining 86% or more did not fail because AI doesn't work. They failed because the architecture wasn't built to support it: governance gaps, integration failures, state management oversights, and orchestration layers that were prototyped but never engineered.

The question is no longer whether your enterprise workflows need AI. It's whether the AI you're building is architected to survive production.

Catalect works with SaaS teams to design, build, and deploy multi-agent systems for the workflows where failure isn't an option. We don't start with tools. We start with the workflow logic, the dependency map, and the governance requirements, and we build the orchestration architecture that gets you from prototype to production.

Whether you need an architecture review, a proof of concept, or a full production deployment, our team brings the orchestration expertise, and the production scars, to get you past the 86%.